📰 Full Story

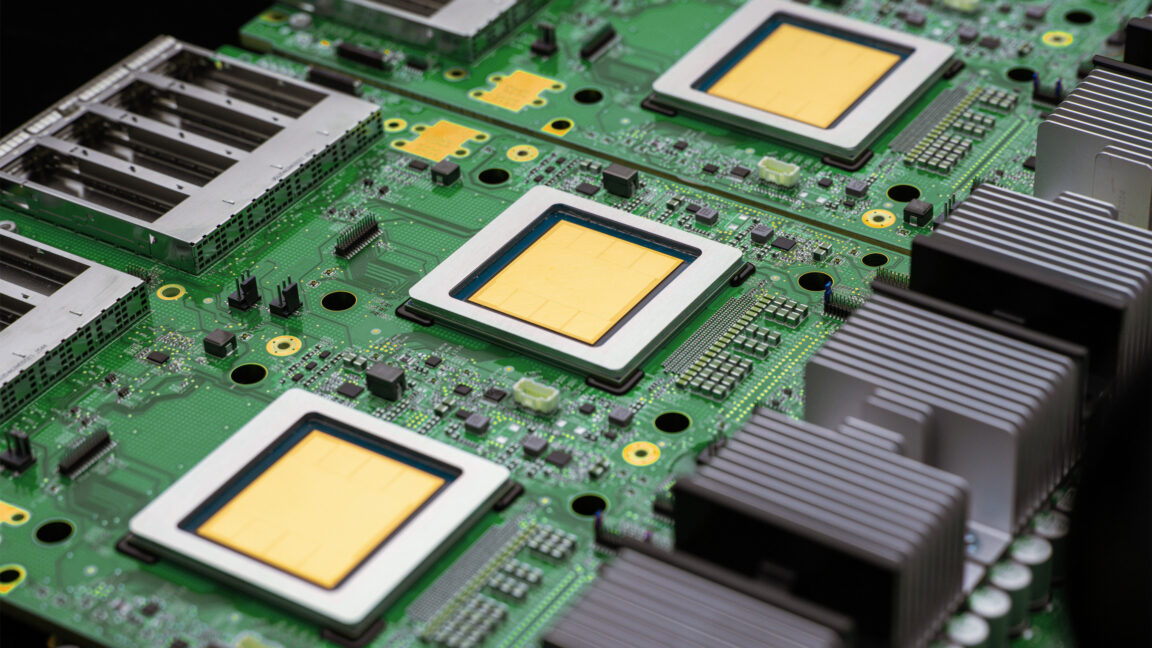

At Google Cloud Next in Las Vegas on April 22-23, 2026, Alphabet unveiled a major push across AI hardware and enterprise software: eighth-generation tensor processing units split into two purpose-built chips — TPU 8t for model training and TPU 8i for low-latency inference — and a unified Gemini Enterprise Agent Platform to build, run and govern AI agents.

Google touted up to roughly 3x faster training and an 80% improvement in performance-per-dollar for inference versus prior generations, larger on-chip SRAM on the inference part (cited at 384MB), and superpod and cluster scaling capabilities (superpods of ~9,600 chips and architectures intended to interconnect far larger fleets). Design partners named include Broadcom and MediaTek, with reports of talks with Marvell to diversify suppliers; customers and partners cited include Anthropic, Meta and national labs.

Google also announced tools such as Workspace Studio, Agent Designer, a 200+ model Model Garden (including Anthropic models), an A2A protocol for agent-to-agent communication, and a $750m fund to accelerate enterprise adoption.

Google said it will continue to offer Nvidia hardware and is collaborating on networking improvements.

💬 Commentary