📰 Full Story

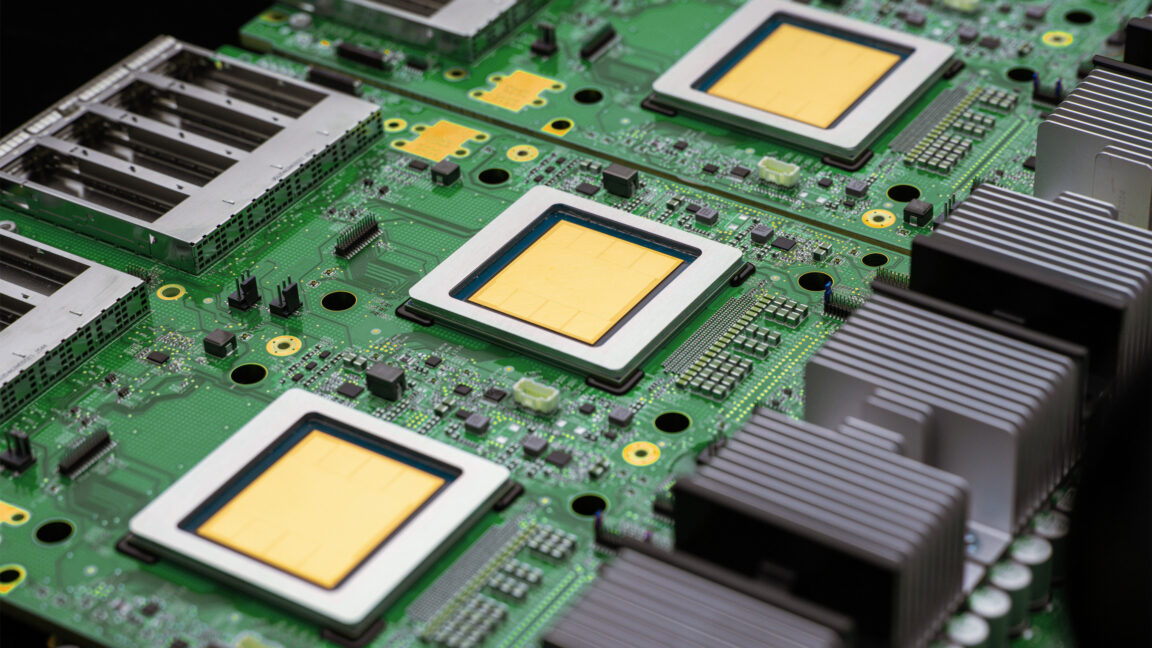

Alphabet’s Google Cloud on April 22 unveiled its eighth-generation tensor processing units (TPUs), splitting the line into two purpose-built chips: the TPU 8t for large‑scale model training and the TPU 8i for low‑latency inference and AI agents.

Google said 8t delivers roughly 2.8x the training throughput of the prior generation and can be clustered into 9,600‑chip pods with networking to scale to logical clusters of more than one million chips.

TPU 8i prioritises on‑chip memory and latency (including up to 384 MB SRAM and expanded HBM) and offers an estimated ~80% improvement in performance‑per‑dollar for inference.

Google also flagged infrastructure updates — new network topologies and storage paths — to support the chips, confirmed continued availability of Nvidia GPUs in its cloud, and highlighted partner design and supply relationships with Broadcom, MediaTek and potential talks with Marvell.

The systems and chips are due to be made generally available later this year.

💬 Commentary